
No coding tutorial today. Just sharing the top 10 deep learning experiences I found recently that run in the browser.

Enjoy the ride, no coding experience needed.

A game where you are challenged to draw something, e.g. a donut, and let the neural net guess what you are drawing. My first attempt got 4 out of 6 right. Let's see how well you can draw.

Under the hood, the model takes the sequence of strokes you draw on the canvas and feeds it into a combination of convolutional layers and a recurrent network. Finally, the class probabilities are produced by the softmax output layer.
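To make the last step concrete, here is a minimal sketch of how a softmax layer turns per-class scores into a guess. The category names and scores are made up for illustration; the real model covers far more categories and its scores come from the layers before it.

```python
import math

def softmax(scores):
    """Turn raw class scores into probabilities that sum to 1."""
    # Subtracting the max keeps exp() from overflowing; the result is unchanged.
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three made-up categories.
categories = ["donut", "wheel", "cookie"]
scores = [2.0, 1.0, 0.1]

probs = softmax(scores)
guess = categories[probs.index(max(probs))]
```

The guess is simply the category with the highest probability, which is why the game can keep changing its mind while you are still drawing.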

And here is the illustration of the structure of the model.

You may wonder where the training data came from. You guessed it! It is a collection of 50 million drawings across 345 categories, contributed by people who played the game.

Another AI Experiment from Google, built on the deeplearn.js library.

Teach the machine to recognize your gestures and trigger events, whether a sound or a GIF. Be sure to train on enough samples and from different angles, otherwise the model will likely find it hard to generalize to your gestures.

This experience is built on TensorFire, a library that runs neural networks in the browser using WebGL, so it is GPU-accelerated. It lets you compete with a computer in real time through your webcam.

Another demo from TensorFire: GPU-accelerated style transfer. It takes one of your photos and turns it into an astonishing piece of art.

If you are familiar with the Keras library, you may have already come across its style transfer demo, which computes two losses, "content" and "style", when training the model. It takes really long to generate a decent image.

This one, running in your browser, takes less than 10 seconds, which even makes it feasible for videos.

This demo is based on the Google Cloud Vision API, which means the browser sends a request to Google's server. It is kind of like using Google Image search to look for similar images.
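For the curious, here is a rough sketch of what those two losses measure. It assumes the common formulation where the content loss compares feature maps directly and the style loss compares their Gram matrices (channel-to-channel correlations). The tiny feature maps below are made-up numbers, not the output of a real network, and this is not the Keras demo's code.

```python
def flatten(rows):
    return [v for row in rows for v in row]

def mse(xs, ys):
    """Mean squared error between two equally long lists."""
    return sum((x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def gram_matrix(features):
    """features: list of channels, each a flat list of activations."""
    n = len(features[0])
    return [[sum(a * b for a, b in zip(ci, cj)) / n for cj in features]
            for ci in features]

def content_loss(generated, content):
    # Compares activations position by position.
    return mse(flatten(generated), flatten(content))

def style_loss(generated, style):
    # Compares channel correlations, ignoring where things are in the image.
    return mse(flatten(gram_matrix(generated)), flatten(gram_matrix(style)))

# Made-up feature maps: 2 channels, 4 spatial positions each.
features = [[1.0, 2.0, 0.0, 3.0],
            [0.0, 1.0, 2.0, 1.0]]
# Same activations with the spatial positions reversed.
mirrored = [channel[::-1] for channel in features]

same_style = style_loss(mirrored, features)        # 0.0: correlations unchanged
different_content = content_loss(mirrored, features)  # > 0: layout changed
```

Mirroring the positions changes the content loss but leaves the style loss at zero, which hints at why the two losses can pull an image toward one photo's layout and another painting's texture at the same time.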

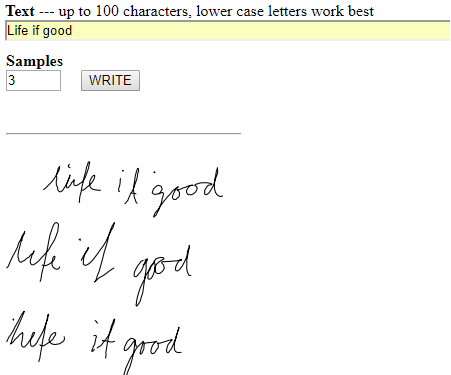

This demo uses LSTM recurrent neural networks for handwriting synthesis. The source code is available.

You sketch a cat, and the model generates a photo for you, very likely a creepy cat.

The demo talks to a backend server running a TensorFlow model. The backend either runs the model itself or forwards the request to the Cloud ML hosted TensorFlow service run by Google.

The cat model is trained on 2k cat photos and on edges automatically generated from those photos. So it is "edge" to "photo". The model itself uses a conditional generative adversarial network (cGAN).

Check out the original paper if you are interested in the implementation details. It shows more example usages for cGAN like "map to aerial", "day to night", etc.

Another in-browser experience built on the hardware-accelerated TensorFire library. It lets you auto-complete your sentences like Taylor Swift, Shakespeare and many more.

The original author's goal is not to make the result "better", but to make it "weirder".

The model is built on rnn-writer. To learn more, you can read the author's page.

Enjoy real-time piano performances created in the browser.

This model creates music in a language similar to MIDI itself, with note-on and note-off events instead of explicit durations. As a result, the model is capable of generating performances with more natural timing and dynamics.

Tinker with a neural network in your browser. Tweak the model by using a different learning rate, activation function and more. Visualize the model as it trains.
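Here is a small sketch of what "events instead of durations" can look like. The `(pitch, start_ms, end_ms)` note format and the `time_shift` event are assumptions for illustration, not the demo's actual encoding.

```python
def notes_to_events(notes):
    """notes: list of (pitch, start_ms, end_ms). Returns a flat event list."""
    timeline = []
    for pitch, start, end in notes:
        timeline.append((start, 1, "note_on", pitch))
        timeline.append((end, 0, "note_off", pitch))
    # Sort by time; at equal times note_off (0) comes before note_on (1).
    timeline.sort()

    events = []
    now = 0
    for time, _, kind, pitch in timeline:
        if time > now:
            # Hypothetical event that moves the clock forward.
            events.append(("time_shift", time - now))
            now = time
        events.append((kind, pitch))
    return events

# Two overlapping notes: C4 for 500 ms, E4 joining after 250 ms.
notes = [(60, 0, 500), (64, 250, 500)]
events = notes_to_events(notes)
```

No event carries a duration. How long a note lasts is implied by how much time passes between its note-on and its note-off, so small timing variations are easy to express.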

Let's have a sneak peek into the brain of the neural network.

Yet another Google AI experiment. The experiment itself might not be running any deep learning model, which is why it is not counted in the top 10. But it is so cool that I cannot help but put it here.

It lets you build your own drum machine from everyday sounds like a dog bark or a baby cry. The data is visualized using t-SNE, meaning similar sounds are grouped together, which makes it really easy to pick one.

First, thousands of everyday sound files are collected into a single array, and all of the audio samples are combined into one large wave file, the audio sprite sheet. Then audio fingerprints are generated and turned into a t-SNE map.

So that's it. Do you have any cool deep learning experiences you want to share? Please leave a comment and let's continue the journey.
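The sprite sheet idea can be sketched in a few lines: glue every clip into one long list of samples and remember where each clip starts. The clip names and sample values below are made up, and the fingerprinting and t-SNE steps are left out.

```python
def build_sprite_sheet(clips):
    """clips: dict of name -> list of samples. Returns (sheet, index)."""
    sheet = []
    index = {}
    for name, samples in clips.items():
        # Remember where this clip starts and how long it is.
        index[name] = (len(sheet), len(samples))
        sheet.extend(samples)
    return sheet, index

def read_clip(sheet, index, name):
    """Cut one clip back out of the combined sheet."""
    start, length = index[name]
    return sheet[start:start + length]

# Hypothetical clips with a handful of fake samples each.
clips = {
    "dog_bark": [0.1, 0.9, -0.4],
    "baby_cry": [0.3, 0.2],
    "door_slam": [1.0, -1.0, 0.5, 0.0],
}
sheet, index = build_sprite_sheet(clips)
bark = read_clip(sheet, index, "dog_bark")
```

One big file means the browser loads the audio once and then plays any sound by jumping to its offset.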
